As organizations rely more heavily on data-driven decisions, their data management strategies are undergoing a fundamental transformation, and they need more agile and efficient data processes. This is where DataOps (Data Operations) comes in. DataOps helps organizations overcome challenges in their data journey, improve data quality, and gain a competitive advantage.

Core Principles of DataOps

DataOps can be defined as a methodology that combines software development methods with data management to improve data analytics processes. DataOps brings together different stakeholders in the data ecosystem – data engineers, data scientists, and business analysts – under a common framework. This methodology aims to increase the automation, quality, and speed of data processing.

Automation

Perhaps the most important principle of DataOps is the automation of data processing. Automating manual operations reduces human error and ensures reproducible results. For example, automating processes such as data integration, transformation, and quality control enables data analytics processes to be carried out much faster and more reliably.

According to Gartner’s 2023 report, companies using automation in data operations can reduce data processing times by up to 60% compared to competitors who rely on manual processes.

Collaboration

DataOps encourages strong collaboration between different teams. In the traditional model, data teams, business units, and IT departments often work in isolation. DataOps eliminates these silos, enabling all stakeholders to work together toward common goals. This collaboration ensures that data projects are better aligned with business objectives and produce more valuable insights.

Continuous Integration

Continuous integration, borrowed from the software development world, is one of the cornerstones of DataOps. Changes to data processing code are automatically tested and then integrated into the main data flow, so errors are detected early and the resulting data products are more reliable.
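As a minimal sketch of what such an automated check could look like, the example below pairs a small transformation with a test that a CI job might run on every change. The function `normalize_order` and its field names are hypothetical and exist only for illustration.

```python
def normalize_order(record: dict) -> dict:
    """Hypothetical transformation step: tidy the name and convert the amount to cents."""
    return {
        "order_id": int(record["order_id"]),
        "customer": record["customer"].strip().title(),
        "amount_cents": round(float(record["amount"]) * 100),
    }


def test_normalize_order() -> None:
    """A check that a CI job could run before a change is merged."""
    raw = {"order_id": "17", "customer": "  ada lovelace ", "amount": "19.99"}
    expected = {"order_id": 17, "customer": "Ada Lovelace", "amount_cents": 1999}
    assert normalize_order(raw) == expected


if __name__ == "__main__":
    test_normalize_order()
    print("all checks passed")
```

If a later change breaks the transformation, the test fails before the change reaches the main data flow.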

Continuous Delivery

Continuous delivery, another important principle of DataOps, ensures that data products (reports, dashboards, analytical models, etc.) are delivered to end users quickly and securely. This approach accelerates the value creation process in data analytics and allows business units to make more agile decisions.

Scalability

Scalability is critical in modern data ecosystems. DataOps ensures that systems and processes can grow seamlessly as data volume increases. The adoption of cloud-based technologies and distributed processing systems plays a major role in implementing the scalability principle of DataOps.

Differences Between DataOps and DevOps

Although DataOps is inspired by the DevOps methodology, there are significant differences between them. Understanding the similarities and differences between these two approaches is critical to implementing DataOps more effectively.

Similarities

Both methodologies embrace automation, continuous integration/continuous delivery (CI/CD), and agile methods. Additionally, both approaches aim to strengthen collaboration between technical teams and business units.

Focus Areas

While DevOps focuses on software development and operations, DataOps focuses on data processing, analytics, and data science. DevOps typically deals with application code, while DataOps deals with both code and the data itself.

Application Areas

DevOps typically covers the development and deployment of software products. DataOps addresses all stages of the data lifecycle: data collection, cleaning, transformation, analysis, and sharing of results.

DataOps Implementation Steps

Successfully implementing the DataOps methodology involves a few basic steps. Together, these steps ensure a systematic transformation of data management processes.

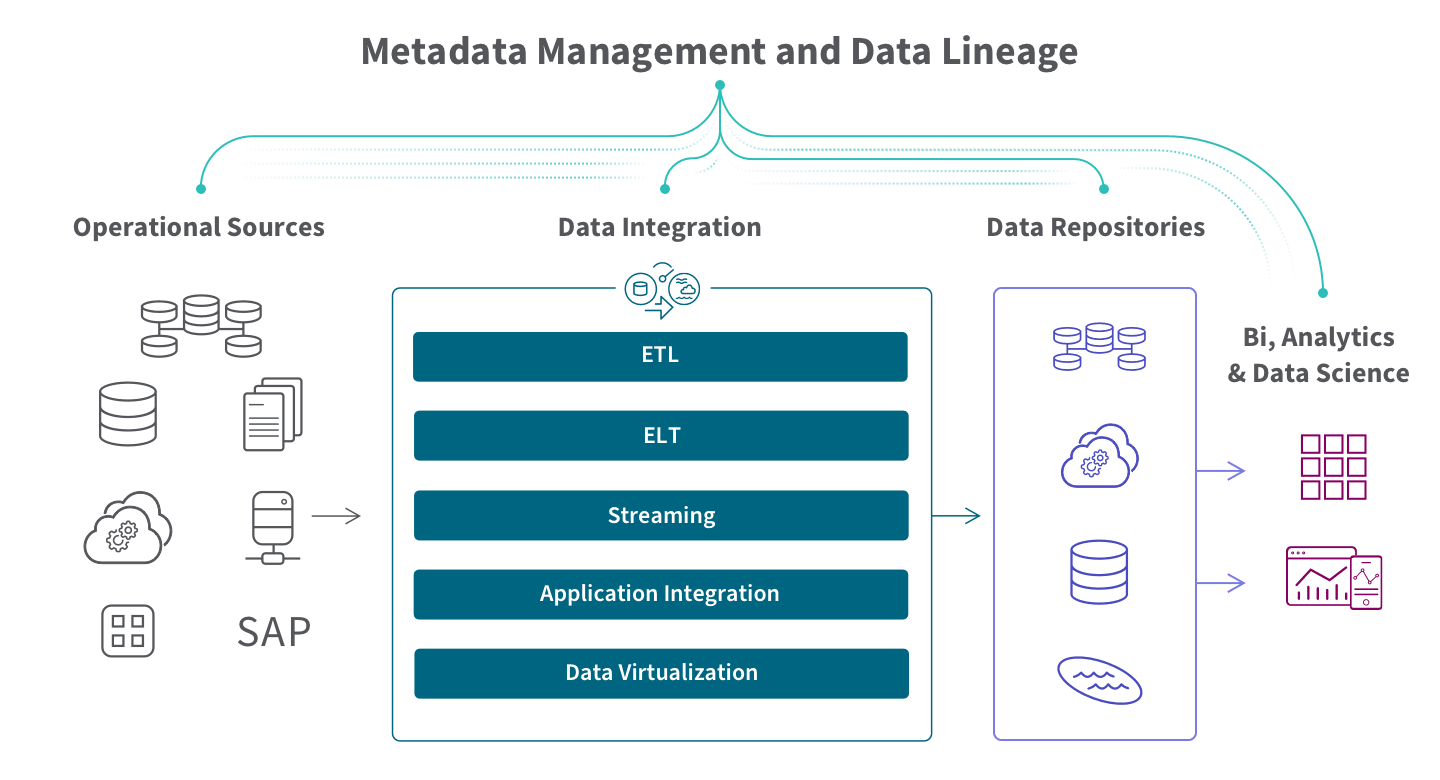

Creating Data Pipelines

Data pipelines are automated workflows that cover the entire process from collecting raw data to processing and analyzing it. The first step in implementing DataOps is to create reliable and efficient data pipelines. These pipelines automate the extraction, cleaning, transformation, and preparation of data from different sources for analysis.

Modern data pipelines adopt Infrastructure as Code (IaC) principles: pipeline definitions and the infrastructure they run on are described in code, so they can be managed through version control systems and reproduced reliably.
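The extraction, cleaning, and transformation stages described above can be sketched as a chain of small functions. This is an illustrative outline only: the inline CSV, the column names, and the function names are assumptions, and a production pipeline would typically run under an orchestration tool rather than as a single script.

```python
import csv
import io
from typing import Iterable, Iterator

# Hypothetical raw input; a real pipeline would read from files, APIs, or databases.
RAW_CSV = """order_id,customer,amount
1, alice ,10.50
2,bob,
3,carol,7.25
"""


def extract(source: str) -> Iterator[dict]:
    """Read raw rows from a CSV source."""
    yield from csv.DictReader(io.StringIO(source))


def clean(rows: Iterable[dict]) -> Iterator[dict]:
    """Drop rows with a missing amount and strip stray whitespace."""
    for row in rows:
        if row["amount"].strip():
            yield {key: value.strip() for key, value in row.items()}


def transform(rows: Iterable[dict]) -> Iterator[dict]:
    """Convert string fields into typed values ready for analysis."""
    for row in rows:
        yield {
            "order_id": int(row["order_id"]),
            "customer": row["customer"],
            "amount": float(row["amount"]),
        }


def run_pipeline(source: str) -> list[dict]:
    """Chain the stages so the whole flow runs as one repeatable unit."""
    return list(transform(clean(extract(source))))


if __name__ == "__main__":
    for record in run_pipeline(RAW_CSV):
        print(record)
```

Because each stage is a plain function kept in version control, the same input should produce the same output on every run.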

Testing and Validation

Testing and validation play a critical role in the DataOps methodology. Different types of tests, such as data quality tests, data integrity checks, and business rule validations, increase the reliability of data processes. Automated tests ensure that changes made to data pipelines can be integrated without disrupting existing processes.

According to McKinsey’s 2024 report, organizations implementing comprehensive test strategies were able to reduce business losses from data errors by up to 40%.
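To make the test types mentioned in this section concrete, here is a minimal sketch that combines a data quality check (required fields), an integrity check (unique identifiers), and a business rule (positive amounts). The field names and the rule itself are assumptions chosen for illustration.

```python
def validate(rows: list[dict]) -> list[str]:
    """Return a list of human-readable problems found in the rows."""
    problems: list[str] = []
    seen_ids: set = set()
    for index, row in enumerate(rows):
        # Data quality: required fields must be present.
        for field in ("order_id", "amount"):
            if row.get(field) is None:
                problems.append(f"row {index}: missing {field}")

        # Integrity: order_id must be unique across the batch.
        order_id = row.get("order_id")
        if order_id is not None:
            if order_id in seen_ids:
                problems.append(f"row {index}: duplicate order_id {order_id}")
            seen_ids.add(order_id)

        # Business rule (hypothetical): amounts must be positive.
        amount = row.get("amount")
        if amount is not None and amount <= 0:
            problems.append(f"row {index}: non-positive amount {amount}")
    return problems


if __name__ == "__main__":
    batch = [
        {"order_id": 1, "amount": 5.0},
        {"order_id": 1, "amount": -2.0},
        {"order_id": 2, "amount": None},
    ]
    for problem in validate(batch):
        print(problem)
```

A pipeline could refuse to publish a batch whenever `validate` returns a non-empty list.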

Monitoring and Traceability

Continuous monitoring of data pipelines and systems enables potential problems to be detected proactively. In the DataOps approach, factors such as data flow, performance metrics, and system health are monitored in real time. Traceability ensures that the journey of data from source to end user can be tracked transparently.
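One simple way to picture this kind of monitoring is to wrap each pipeline step so that it records row counts and duration, then compare those metrics against thresholds. The sketch below assumes hypothetical names (`RunMetrics`, `monitored`, `alerts`) and arbitrary thresholds. A real deployment would usually send these metrics to a dedicated monitoring system.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass
class RunMetrics:
    step: str
    rows_in: int
    rows_out: int
    seconds: float


def monitored(step: str, func: Callable[[list], list], rows: list) -> tuple[list, RunMetrics]:
    """Run one pipeline step and record how many rows went in and out, and how long it took."""
    start = time.perf_counter()
    result = func(rows)
    elapsed = time.perf_counter() - start
    return result, RunMetrics(step, len(rows), len(result), elapsed)


def alerts(metrics: RunMetrics, max_drop_ratio: float = 0.2, max_seconds: float = 5.0) -> list[str]:
    """Compare metrics with (assumed) thresholds and describe any breaches."""
    found: list[str] = []
    if metrics.rows_in:
        dropped = (metrics.rows_in - metrics.rows_out) / metrics.rows_in
        if dropped > max_drop_ratio:
            found.append(f"{metrics.step}: dropped more than {max_drop_ratio:.0%} of rows")
    if metrics.seconds > max_seconds:
        found.append(f"{metrics.step}: took longer than {max_seconds} seconds")
    return found


if __name__ == "__main__":
    deduplicate = lambda rows: list(dict.fromkeys(rows))
    output, metrics = monitored("deduplicate", deduplicate, [1, 1, 1, 2])
    print(metrics)
    print(alerts(metrics))
```

Here the deduplication step removes half of the rows, which exceeds the assumed 20% threshold and raises an alert.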

Data Governance Integration

DataOps integrates data governance principles into processes. Data privacy, security, and compliance requirements are incorporated into automated data processing. This integration ensures that organizations comply with regulations and maintain ethical standards in data use.
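As an illustration of building a privacy rule into automated processing, the sketch below pseudonymizes sensitive fields before a record moves further down the pipeline. The field list, the salt handling, and the function names are assumptions. Salted hashing alone is not a guarantee of regulatory compliance, so treat this as a starting point rather than a complete control.

```python
import hashlib

# Hypothetical list of fields treated as personally identifiable information.
PII_FIELDS = {"email", "phone"}


def pseudonymize(value: str, salt: str) -> str:
    """Replace a sensitive value with a salted SHA-256 digest (truncated for readability)."""
    digest = hashlib.sha256((salt + value).encode("utf-8")).hexdigest()
    return digest[:16]


def apply_privacy_policy(record: dict, salt: str) -> dict:
    """Return a copy of the record with every PII field pseudonymized."""
    return {
        key: pseudonymize(str(value), salt) if key in PII_FIELDS else value
        for key, value in record.items()
    }


if __name__ == "__main__":
    record = {"customer_id": 7, "email": "ada@example.com", "country": "UK"}
    # In practice the salt would come from a secrets manager, not from source code.
    print(apply_privacy_policy(record, salt="example-salt"))
```

Because the same input and salt always produce the same digest, records can still be joined on the pseudonymized field without exposing the original value.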

Benefits of DataOps

Implementing the DataOps methodology provides organizations with several important advantages. These benefits increase the effectiveness of data-driven decision-making processes while reducing operational costs.

Improved Data Quality

DataOps’ automated testing and validation mechanisms significantly improve data quality. Consistent, accurate, and up-to-date data enables more reliable analytical results. High-quality data allows decision-makers to make more accurate decisions and increases the chances of success for strategic initiatives.

Rapid Delivery Times

Automation and continuous delivery principles significantly reduce the development and delivery times of data products. Data projects that could take weeks or months with traditional approaches can be completed within days or hours with DataOps. This speed allows organizations to respond more quickly to market changes.

Cross-Team Collaboration

DataOps fosters stronger collaboration among the different teams in the data ecosystem. By removing barriers between data engineers, data scientists, IT operations teams, and business units, it enables a more holistic approach. This collaboration helps produce data solutions that are better aligned with business objectives.

Cost Efficiency

Through automation and standardization, the need for manual operations decreases and resource utilization is optimized. Reducing errors and detecting them early prevents costly correction work. DataOps practices allow organizations to achieve higher returns on their IT and data investments.

DataOps Use Cases by Industry

The DataOps methodology has various applications in different sectors. Each sector creates value by adapting DataOps to its specific needs and challenges.

DataOps in the Financial Sector

Financial institutions process large amounts of data in areas such as risk analysis, fraud detection, and customer behavior analysis. DataOps helps these organizations provide fast and reliable data analytics while complying with complex regulations.

For example, a major global bank succeeded in reducing risk assessment times by 70% by applying DataOps principles in credit risk analysis processes. Automated data pipelines and comprehensive test strategies enabled faster and more accurate risk decisions.

DataOps in the Retail Sector

Retail companies benefit from DataOps in areas such as inventory management, supply chain optimization, and personalized marketing. Real-time data analysis enables quick response to changes in customer behavior.

A retail giant’s adoption of the DataOps approach increased inventory forecast accuracy by 35%, significantly reducing inventory costs and increasing customer satisfaction.

DataOps in E-Commerce

E-commerce platforms use DataOps in areas such as user behavior analysis, product recommendations, and dynamic pricing. Being able to provide consistent and fast data analysis even during high traffic periods is critical to gaining a competitive advantage.

By implementing DataOps principles, a popular e-commerce platform improved the accuracy of its product recommendation engine by 45% and increased time spent on page by 25%.

DataOps in the Manufacturing Sector

Manufacturing companies benefit from DataOps in areas such as predictive maintenance, quality control, and production line optimization. Sensor data and machine learning models are used to increase production efficiency.

A major automotive manufacturer managed to reduce production errors by 28% with DataOps implementation, saving millions of dollars in annual costs.

DataOps in Telecommunications

Telecommunications companies use DataOps in areas such as network performance optimization, customer experience improvement, and revenue loss prevention. Large-scale data processing and real-time analytics are critical to improving the quality of telecommunications services.

A telecommunications leader’s implementation of the DataOps methodology reduced network outages by 32% and significantly increased customer satisfaction.

Required Roles and Skills for DataOps

Successful implementation of the DataOps methodology requires collaboration among professionals with different skill sets. Each of these roles focuses on different aspects of the data ecosystem, ensuring a holistic approach.

Data Engineers

Data engineers are responsible for the design, development, and maintenance of data pipelines. They have expertise in ETL (Extract, Transform, Load) processes, data storage systems, and data processing technologies. In the DataOps methodology, data engineers play a critical role in creating automated and scalable data infrastructures.

Data Scientists

Data scientists extract valuable insights from data by developing analytical models. They have expertise in statistical analysis, machine learning, and data visualization. In the context of DataOps, data scientists work closely with data engineers to ensure that models are seamlessly deployed to production environments.

Business Analysts

Business analysts ensure that data strategies are aligned with business objectives. They act as a bridge between technical teams and business units. In the DataOps methodology, they make sure that data products meet business requirements, respond to user needs, and create value.

DevOps Specialists

DevOps specialists have expertise in continuous integration/continuous delivery (CI/CD) processes, automation tools, and containerization. In the context of DataOps, DevOps specialists ensure that data pipelines and analytical applications are deployed reliably and efficiently.

Challenges in Implementing DataOps and Proposed Solutions

Implementing the DataOps methodology may present various challenges for organizations. Understanding and proactively addressing these challenges is critical for a successful DataOps transformation.

Organizational Challenges

Traditional organizational structures often have silos that hinder interdepartmental collaboration. The adoption of DataOps requires breaking down these silos and establishing stronger collaboration between different teams.

Proposed Solution: Creating cross-functional teams and setting common goals can help eliminate silos. Regular knowledge-sharing meetings and shared information exchange platforms can strengthen collaboration between teams.

Technical Challenges

Existing data infrastructures and tools may not be sufficient to support DataOps principles. Integrating legacy systems and making different technologies interoperate can create technical challenges.

Proposed Solution: Adopting a modular approach and following a phased transformation strategy can help overcome technical challenges. Modern integration methods such as APIs and microservices can make it easier for different systems to interoperate.

Change Management

DataOps is not just a technological change but also a cultural transformation. It may take time for employees to adopt new methodologies and tools, and resistance may be encountered.

Proposed Solution: Comprehensive training programs and pilot projects that clearly demonstrate the benefits of change can help the organization embrace the DataOps transformation. Identifying change leaders and sharing success stories can reduce organizational resistance.

DataOps Trends for 2025 and Beyond

As the data ecosystem continues to evolve rapidly, the future of the DataOps methodology is also taking shape. Here are some important trends that will impact DataOps in the coming years:

Artificial Intelligence Integration

Artificial intelligence and machine learning will play an increasingly important role in the automation of DataOps processes. Anomaly detection, automatic data quality improvement, and self-healing features of data pipelines will become core features of AI-powered DataOps platforms.
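Anomaly detection in a pipeline does not have to start with a trained model. As a deliberately simple statistical stand-in (not a machine learning method), the sketch below flags a daily row count that deviates strongly from recent history using a z-score. The threshold of 3.0 and the sample numbers are assumptions.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], latest: float, threshold: float = 3.0) -> bool:
    """Flag the latest value if it lies more than `threshold` standard deviations from the mean."""
    average = mean(history)
    spread = stdev(history)  # requires at least two historical values
    if spread == 0:
        return latest != average
    return abs(latest - average) / spread > threshold


if __name__ == "__main__":
    daily_row_counts = [1000, 1020, 980, 1010, 990]  # hypothetical history
    print(is_anomalous(daily_row_counts, 400))
    print(is_anomalous(daily_row_counts, 1005))
```

AI-powered platforms would replace this fixed rule with models that learn seasonality and trends, but the pipeline integration point stays the same: a check that can halt or flag a run.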

Data Security-Focused Approaches

With the increase in data privacy regulations, security and compliance will become an integral part of DataOps strategies. The “Security as Code” approach will ensure that security controls are automatically integrated into data processing.
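One way to read "Security as Code" is that security rules live next to the pipeline code and are checked automatically before deployment. The sketch below assumes a hypothetical pipeline configuration dictionary and three illustrative policies. Real policies and configuration keys will differ by platform.

```python
from typing import Callable

# Hypothetical policies: each maps a description to a rule over the pipeline config.
POLICIES: dict[str, Callable[[dict], bool]] = {
    "encryption at rest must be enabled": lambda cfg: cfg.get("encrypt_at_rest") is True,
    "public access must be disabled": lambda cfg: cfg.get("public_access") is False,
    "retention must not exceed 365 days": lambda cfg: cfg.get("retention_days", float("inf")) <= 365,
}


def check_policies(config: dict) -> list[str]:
    """Return the descriptions of all policies the config violates."""
    return [name for name, rule in POLICIES.items() if not rule(config)]


if __name__ == "__main__":
    pipeline_config = {"encrypt_at_rest": True, "public_access": True, "retention_days": 400}
    violations = check_policies(pipeline_config)
    for violation in violations:
        print("policy violation:", violation)
    # A CI job could fail the deployment when any violation is found.
    raise SystemExit(1 if violations else 0)
```

Missing settings count as violations here, so a pipeline cannot pass simply by omitting a security option.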

Hybrid Cloud Strategies

Organizations will adopt hybrid cloud strategies that match each data type and workload to the environment that suits it best. DataOps practices will be critical for managing the complexity of hybrid and multi-cloud environments and for keeping data processing consistent across them.

Conclusion

DataOps offers a powerful methodology for managing the complexity of modern data ecosystems and accelerating data-driven innovation. By embracing DataOps principles, organizations can improve data quality, accelerate analytical processes, and obtain more valuable business insights. The DataOps methodology will continue to evolve in line with technological developments and changing business needs. Developing a DataOps strategy tailored to your organization’s unique needs will be a critical step in your digital transformation journey to maximize the potential of your data ecosystem.

References:

- Gartner, “Market Guide for DataOps Tools”, 2023

- McKinsey & Company, “The Data-Driven Enterprise: Transforming Business in the Digital Age”, 2024

- IDC, “Worldwide DataOps Software Forecast, 2023-2027”, 2023